Hi Jay,

Regarding the WS2812 LED: I know that timing is quite critical here, so thank you for the pointer to the Cypress code. For my application it is sufficient to use the workaround of switching to the full-speed 48 MHz clock, as the LED is used only during start-up.

Understanding the µC and mC is quite easy. “C” means Coulomb, which is the unit of electric charge. 1 Coulomb is the charge that a current of 1 Ampere delivers in 1 second, so basically it means current * time. You can think of this in terms of “amount of electrons”, with 1 Coulomb being about 6241509074460762607 electrons (= 6.24 * 10^18 = 6.24e18 electrons).

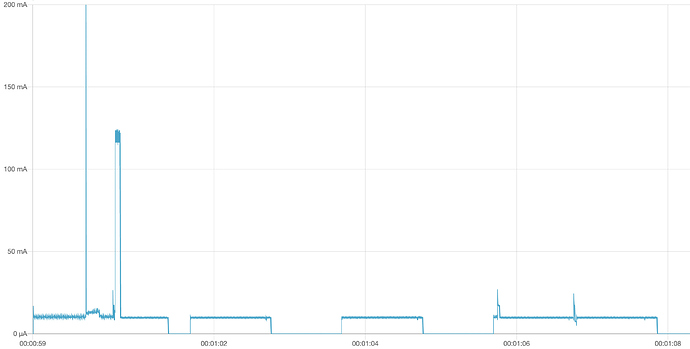

With a changing current this becomes the integral of current over time, so it’s the area under the current curve in the diagrams (or the average current multiplied by the time). If, as in your first example, a processing cycle needs 10 mA over 40 ms, this is a charge of 0.010 A * 0.040 s = 0.0004 C = 400 µC. This matches the values shown in the grey box in the diagram because the current is almost constant at 10 mA.
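To make the "area under the curve" idea concrete, here is a minimal Python sketch that integrates sampled current over time with the trapezoidal rule. The function name and the sample values are my own illustration (an idealised constant 10 mA for 40 ms), not data read from your diagrams.

```python
def charge_coulombs(times_s, currents_a):
    """Integrate current samples over time (trapezoidal rule) -> charge in C."""
    if len(times_s) != len(currents_a):
        raise ValueError("times and currents must have the same length")
    total = 0.0
    for i in range(1, len(times_s)):
        dt = times_s[i] - times_s[i - 1]
        # Area of one trapezoid: average of two neighbouring samples times dt
        total += 0.5 * (currents_a[i] + currents_a[i - 1]) * dt
    return total


# Idealised first example (assumed samples): constant 10 mA for 40 ms
times = [0.000, 0.010, 0.020, 0.030, 0.040]   # seconds
currents = [0.010] * len(times)               # amperes
q = charge_coulombs(times, currents)
print(f"{q * 1e6:.0f} µC")                    # 400 µC
```

With real measurement data you would feed in the exported samples instead; for a constant current the result is simply current * time.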

The capacity specified for a battery (rechargeable or not) is usually given in mAh, which is the same dimension as Coulombs but in a different unit. 1 mAh = 1 mA * 1 h = 0.001 A * 3600 s = 3.6 C. So if you have an off-the-shelf LiSOCl₂ battery with 2600 mAh, this would be 9360 C. Simply calculated, at 400 µC per cycle this would be sufficient for 23,400,000 cycles, but of course this ignores other factors such as self-discharge and aging of the battery.
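The conversion can be checked in a few lines of Python. This is only a rough sketch: the 400 µC per cycle is taken from the first example, the helper name is made up, and self-discharge and aging are deliberately ignored.

```python
COULOMBS_PER_MAH = 3.6  # 1 mAh = 0.001 A * 3600 s = 3.6 C


def mah_to_coulombs(capacity_mah):
    """Convert a battery capacity from mAh to Coulombs."""
    return capacity_mah * COULOMBS_PER_MAH


battery_c = mah_to_coulombs(2600)        # 2600 mAh cell
charge_per_cycle_c = 400e-6              # 400 µC per processing cycle
cycles = battery_c / charge_per_cycle_c  # idealised upper bound

print(f"{battery_c:.0f} C")              # 9360 C
print(f"{cycles:,.0f} cycles")           # 23,400,000 cycles
```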

So far we have assumed a constant voltage. If we take the voltage into consideration, we get Watts instead of Amperes (1 W = 1 V * 1 A). Together with the time we get Wattseconds, or Joules (1 Ws = 1 V * 1 As = 1 V * 1 C = 1 J), as a unit of energy. So the 2600 mAh battery, which has 3.6 Volts, holds an energy of 9.36 Wh = 33,696 Ws ≈ 33.7 kJ.
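As a quick cross-check of the energy figures, the following Python sketch multiplies charge by voltage. It assumes the cell voltage stays at a constant 3.6 V over the whole discharge, which is a simplification; the function name is hypothetical.

```python
def battery_energy_joules(capacity_mah, voltage_v):
    """Energy in Joules (= Ws), assuming a constant cell voltage."""
    charge_c = capacity_mah * 3.6   # 1 mAh = 3.6 C
    return charge_c * voltage_v     # 1 C * 1 V = 1 J


energy_j = battery_energy_joules(2600, 3.6)
energy_wh = energy_j / 3600         # 1 Wh = 3600 Ws

print(f"{energy_j:.0f} J")          # 33696 J
print(f"{energy_wh:.2f} Wh")        # 9.36 Wh
```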

But as long as the voltage is constant, electric charge and energy are proportional.

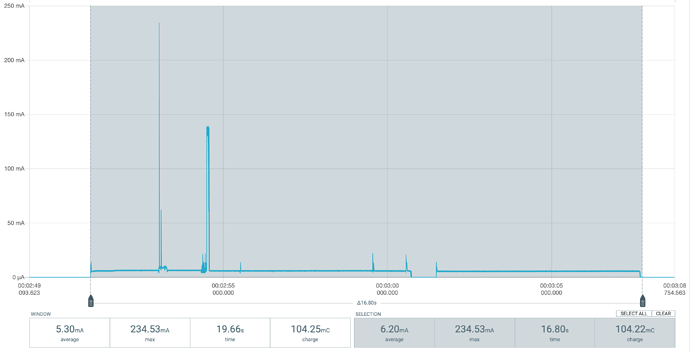

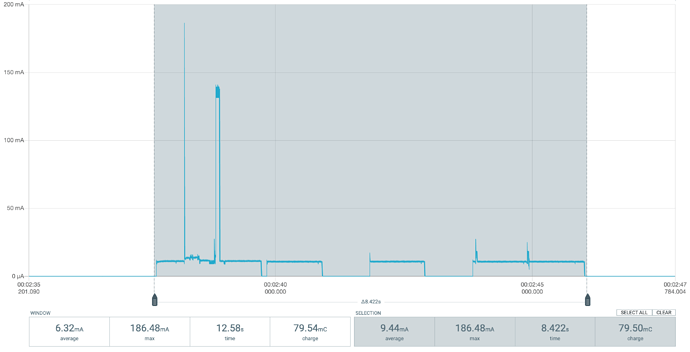

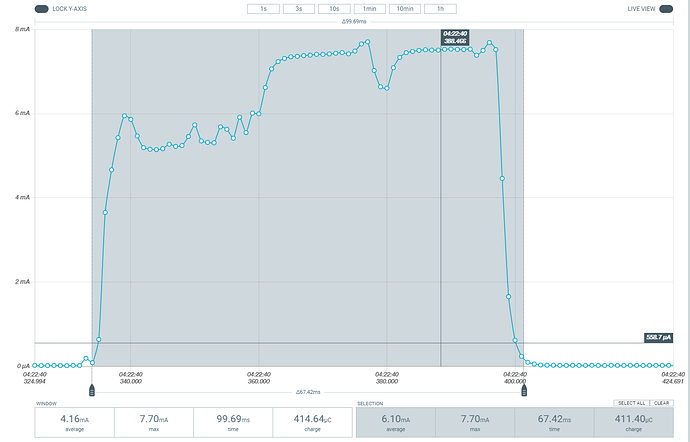

When comparing the two diagrams you sent, the second one with reduced clock frequency shows an average current of 6.1 mA over the course of a cycle which needs 67.42 ms, resulting in a charge of 6.1e-3 A * 67.42e-3 s ≈ 411 µC, in line with the 411.4 µC shown in the grey box. This is actually more than the full-speed version’s 389 µC, so in theory reducing the clock frequency has no positive effect here.
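The same comparison as a small Python sketch. It uses the handy fact that mA * ms gives µC directly. The 389 µC for the full-speed run is taken as-is from the grey box; the small gap between the computed value and the displayed 411.4 µC is presumably due to rounding of the displayed average current, but that is my assumption.

```python
def cycle_charge_uc(avg_current_ma, duration_ms):
    """Charge per cycle in µC: mA * ms = 1e-3 A * 1e-3 s = 1e-6 C."""
    return avg_current_ma * duration_ms


slow_uc = cycle_charge_uc(6.1, 67.42)   # reduced clock, values from the diagram
fast_uc = 389.0                         # full speed, value from the grey box

print(f"reduced clock: {slow_uc:.1f} µC")
print(f"full speed:    {fast_uc:.1f} µC")
print(f"ratio slow/fast: {slow_uc / fast_uc:.3f}")
```

A ratio above 1 means the slower clock draws more charge per cycle, not less.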

This could be a consequence of the fact that most of the power consumption in CMOS circuits occurs when MOSFETs (transistors) are switching state, which is (kind of) proportional to the number of computation steps being executed. So there is little difference in energy consumption when the same calculation is carried out quickly with high power or slowly with low power. It only gets interesting when secondary effects have to be taken into consideration, e.g. operating system tasks or phases when the system is waiting for something external and CPU usage is less than 100% (wait cycles, elapsed time, sensor input, etc.).

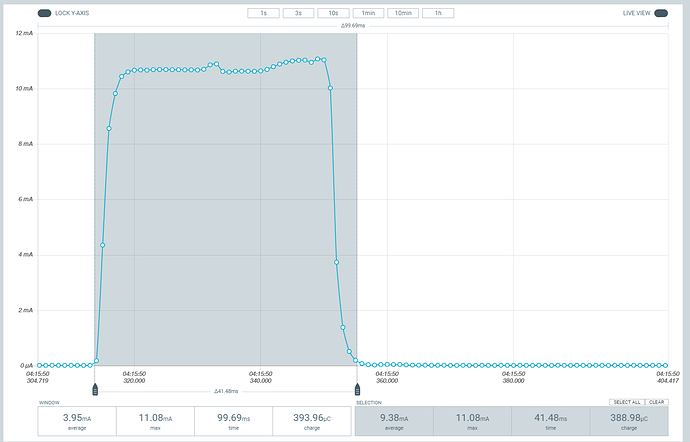

In my case, I am wondering whether reducing the clock speed, and therefore the current consumption, could have a positive effect, as I do not execute relevant amounts of calculation but mainly have to wait for the sensors to come up and do their measurements. Reducing current here could be worthwhile. On the other hand, if the operating system (i.e. runtime environment and system libraries) behaves completely differently depending on the clock setting, this may be counterproductive. I am referring to the missing sleep phases during the LoRaWAN Tx/Rx phase here.

) works perfectly well in my devices since the first one was deployed two years ago.
